News

CARLA AD Challenge 2025

We are sorry to announce that the CARLA Autonomous Driving Challenge will not run in 2025 due to unforeseen circumstances. We still encourage everyone to keep using the CARLA AD Leaderboard locally.

CARLA AD Leaderboard 2.1

Starting in March 2025, the CARLA AD Leaderboard will be updated to version 2.1.

This new version comes with significant modifications to the infraction score. As a result, infraction scores will increase overall, so comparing results between Leaderboard 2.1 and Leaderboard 2.0 is not recommended.

Participants of the CARLA AD Leaderboard have figured out over time that an optimal approach to achieving a high score isn’t to attempt completion of the full route, but instead to trigger the simulation to end once the autonomy stack has completed only a certain percentage of the route. The advantage of this early stopping strategy is due to the exponential nature of the infraction score, meaning that continuing to move risks higher penalties than staying still.

These types of strategies go against the spirit of the leaderboard, as the participants’ aim is no longer to complete the route and improve the AV stack, but to find the best possible moment to stop in order to maximize the score. We’ve changed the infraction score from exponential to linear so that the early stopping strategy no longer pays off.
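The effect described above can be illustrated with a small sketch. It is not the Leaderboard's actual metric (see the Evaluate section for that). It assumes the final score is route completion multiplied by an infraction penalty, that infractions accumulate at a constant rate along the route, and it uses two hypothetical penalty shapes: one that shrinks exponentially with the number of infractions and one whose denominator grows linearly. The function names, the coefficients `0.6` and `0.5`, and the rate of five infractions per route are all made up for illustration.

```python
def multiplicative_penalty(n, coeff=0.6):
    """Assumed exponential form: every infraction multiplies the penalty by coeff."""
    return coeff ** n


def reciprocal_linear_penalty(n, weight=0.5):
    """Assumed linear-style form: the denominator grows linearly with infractions."""
    return 1.0 / (1.0 + weight * n)


def best_stopping_fraction(penalty, infractions_per_route=5.0, steps=100):
    """Return the route fraction in [0, 1] that maximizes completion * penalty.

    Infractions are modeled as accumulating at a constant rate, so driving a
    fraction f of the route incurs infractions_per_route * f infractions.
    """
    fractions = [i / steps for i in range(steps + 1)]
    return max(fractions, key=lambda f: f * penalty(infractions_per_route * f))


if __name__ == "__main__":
    # Under the exponential form the best score comes from stopping early.
    print(best_stopping_fraction(multiplicative_penalty))
    # Under the linear-style form the best score comes from finishing the route.
    print(best_stopping_fraction(reciprocal_linear_penalty))
```

Under these assumptions, the exponential form rewards stopping well before the end of the route, while the linear-style form always rewards driving further, which is the behavior the change is meant to encourage.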

The exact metrics are available in the Evaluate section. Note that the routes used for training, validation and testing, along with the scenarios within them, haven’t changed.

Overview

The main goal of the CARLA Autonomous Driving Leaderboard is to evaluate the driving proficiency of autonomous agents in realistic traffic scenarios. The leaderboard serves as an open platform for the community to perform fair and reproducible evaluations of autonomous vehicle agents, simplifying the comparison between different approaches. The Leaderboard is currently at version 2.1, but older versions are still supported.

Task

The CARLA AD Leaderboard challenges AD agents to drive through a set of predefined routes. For each route, agents will be initialized at a starting point and directed to drive to a destination point, provided with a description of the route through GPS style coordinates, map coordinates or route instructions. Routes are defined in a variety of situations, including freeways, urban areas, residential districts and rural settings. The Leaderboard evaluates AD agents in a variety of weather conditions, including daylight scenes, sunset, rain, fog, and night, among others.

Scenarios

Agents will face multiple traffic scenarios based on the NHTSA typology. The full list of traffic scenarios can be reviewed on this page, but here are some examples.

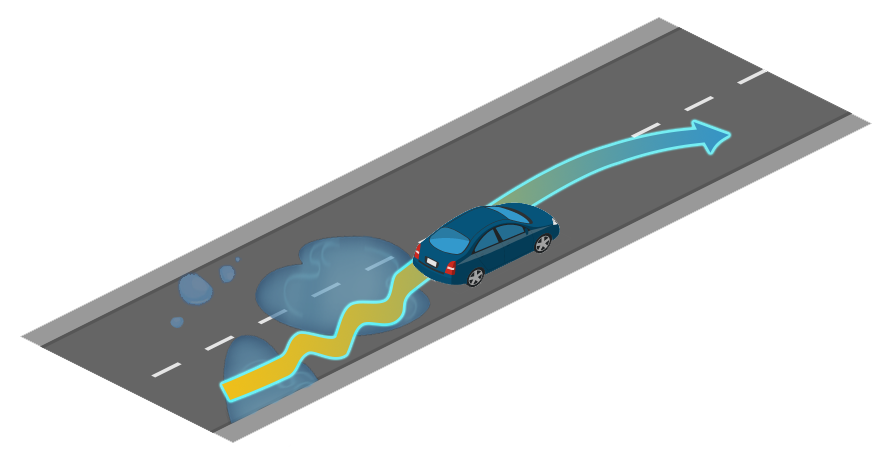

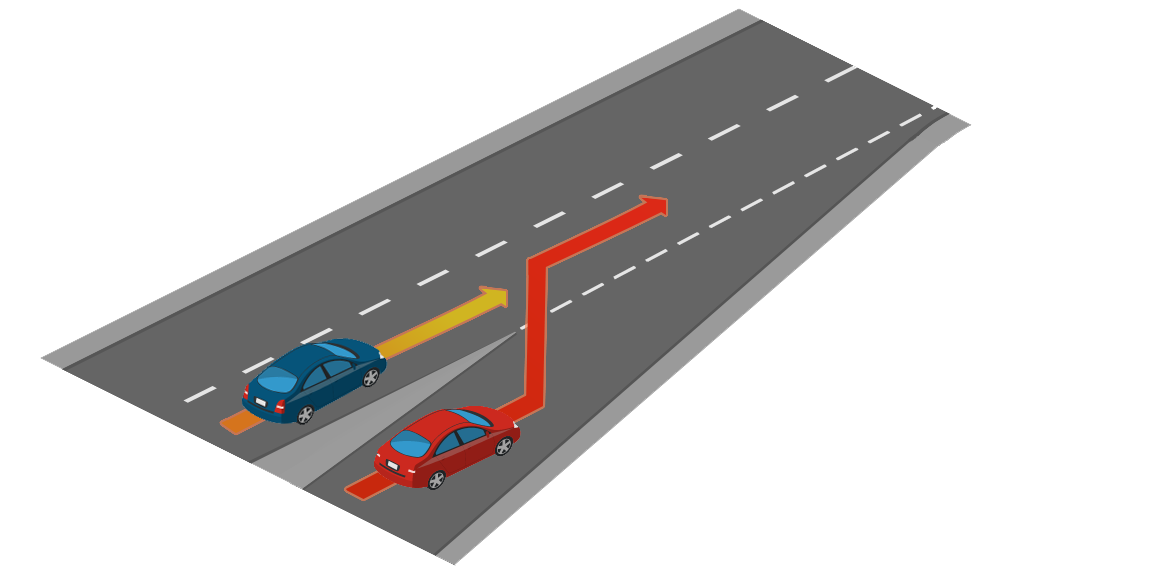

- Lane merging.

- Lane changing.

- Negotiations at traffic intersections.

- Negotiations at roundabouts.

- Handling traffic lights and traffic signs.

- Yielding to emergency vehicles.

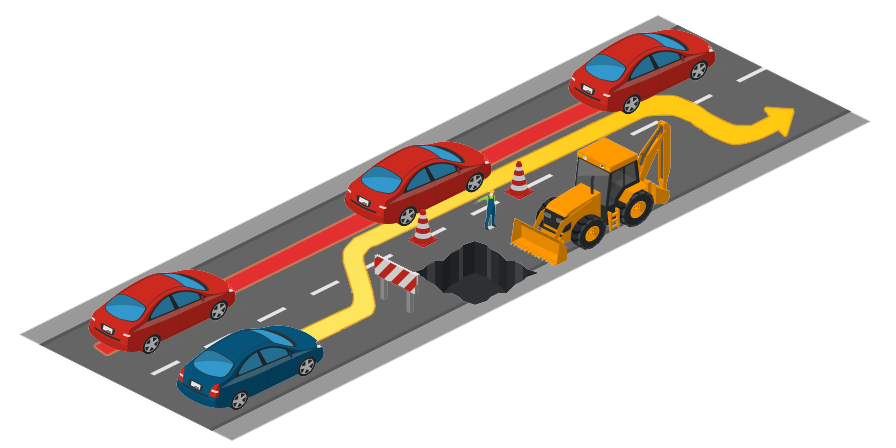

- Coping with pedestrians, cyclists, and other elements.

Get started

For more details about the different modalities and metrics, check the Evaluation section.

To get familiar with the leaderboard, we recommend reading the Get started section carefully. Please spend enough time making sure everything works as expected locally.

Once you are ready, check the Submit section to learn how to prepare your submission.

Older versions

While the Leaderboard is currently at version 2.1, older versions are still available to support your previous work.

Leaderboard 2.0

For everything related to the Leaderboard 2.0 version, follow the links below:

Leaderboard 1.0

For everything related to the Leaderboard 1.0 version, follow the links below:

Terms and Conditions

The CARLA Autonomous Driving Leaderboard is offered for free as a service to the research community thanks to the generosity of our sponsors and collaborators.

Each submission will be evaluated in AWS using a g5.12xlarge instance. This gives users access to a dedicated node with a modern GPU and CPU.

Teams are provided a finite number of submissions (currently 20) for a given month.

Submission allowance is automatically refilled every month. The organizers of the CARLA leaderboard reserve the right to assign additional allowances to a team. The organization also reserves the right to modify the default values of the monthly allowance for submissions.

It is strictly prohibited to misuse or attack the infrastructure of the CARLA leaderboard, including all software and hardware that is used to run the service. Actions that deviate from the spirit of the CARLA leaderboard could result in the termination of a team.

For further instructions, please read the terms and conditions.